Being Sassy to Socrates

Socrates is arguably one of the most well-known classical Greek philosophers and has influenced the thoughts of others that followed him, most notably, Plato, one of his most renowned students. Socrates and Plato had many intriguing conversations, and one of the most famous conversations that circulated around the Internet was about a very popular subject:

“One day, Plato asked Socrates What love is. Socrates said: I ask you to go across this rice field, pick up and bring back the biggest and best ear of wheat, but remember one thing, you cannot go back, and you just have one chance.

Then Plato did so, but he came back with nothing after a long time. Socrates asked him why?

Plato answered: I once saw some very big and good wheat when I walked through the field, but I was always thinking that maybe there would some bigger and better ones, so I just passed by; but what I saw later is not better than before, so I had nothing at last.

Socrates said this is love.”[1]

Source: [1]

Unfortunately, not much of a philosopher myself, I cannot offer much on the anecdote, and I can neither agree nor disagree with Socrates’ dissertation of love with Plato. However, given that Plato was also a bit of a mathematician himself upon influencing Pythagoras (yes the one named for the Pythagorean Theorem), and having the “Platonic Solids” named after him, I cannot help but think that Plato had an ever-so-slight inkling of being sassy with his philosophy teacher. The alternative conversation might go something like this:

Socrates: And so Plato, do you now see what love is?

Plato: Well, perhaps love is a mysterious subject, but I’m pretty sure I ended up with the biggest ear of wheat after all that walking, so that’s a nice consolation.

Socrates: WHAT??? It was a huge wheat field, how on Earth did you managed to get the biggest ear?

Plato: Well not exactly the biggest, but I have one-to-two odds of ending up with the biggest ear.

Socrates: You have got to be kidding me. Let’s say there are 1000 ears of wheat in the path, and remember:

- You cannot backtrack.

- Once you’ve picked the ear of wheat, that’s it. You have got only one chance.

So tell me, my dear Plato, if you randomly picked up an ear, you have only gotten a 1/1000 chance of getting the biggest one. Where is your one-to-two odds coming from?

Plato: Sorry teacher. I lied.

Socrates: Whew.

Plato: I actually have a better than one-to-two odds, implying that I have a 33% chance of getting the jackpot. I actually have an approximately 37% chance of picking out the cream of the crop (no pun intended), and this figure is the same even if we have 10000 ears, 100,000 ears, 1 million ears…In fact, the more ears there are, the more certain that I will have a 37% chance of getting the prize!

Socrates: …

Okay probably the conversation wouldn’t have happened, as the earliest the study of probability can be traced back is around the 8th century with Arab mathematicians. Nonetheless, Plato is onto something here. Believe it or not, the seemingly random nature of the exercise of picking out the best ear of wheat, with no backtracking nor second chances, has an optimal strategy that produces a better than one-to-two odds of getting the prize, even as the number of ears goes to infinity.

The Intuition

Unlike my previous blog about the Coin Rotation Paradox where I first provided the math, then applied intuition, I will reverse steps here to provide some context into the math that we will be exploring. In order to provide the context, let me formally define the problem.

Formal Problem Statement

Let there be N distinct objects, each with an associated score that can be compared on the real-number line (e.g. object: ear of wheat; score: size). What is an optimal strategy that maximizes the probability of picking out the one object with the highest score among the N objects subject to the following conditions:

- You may only examine one object at a time.

- Once you have examined that object, you must make a decision to accept it or move on.

- If you choose to accept, then the process stops. You may not examine any more objects.

- If you choose to move on, then you cannot revisit this object later or any of the objects previously examined.

You may assume that each object’s scores are uniformly randomly distributed across a range from zero to positive infinity, and each score is independent of the others and not related to each other in any way.

The Strategy

Needless to say, picking out the object with the highest score is a daunting task if you only have one shot and you cannot revisit previously examined ones or make any assumptions regarding the correlations among the scores. If you randomly pick out an object, you will have a 1/N chance of picking out the best one, which goes to 0 as N goes to infinity. Can we do better?

The answer is yes, and the intuitive plan is to remember that the more objects you examine, the better sense of the score range you will obtain. Remember, the problem’s conditions did not say that you cannot use the scores previously examined in your judgment, and simply states that the scores among the objects are not related in any way and are distributed in an equally likely fashion. Even though you cannot look at a single score and judge its value, you can recall the previous scores and compare those with the one you are looking at. Doing so will provide a gauge that will increase your chance of picking out the best object. For example, say you are looking at 10 objects. You passed on the first three objects, and look at the fourth one, and its score is not as high as the second object. You can therefore immediately reject this object as you recall seeing one with a higher score, so the fourth object cannot be the best one. Having this extra information already improved your chances over picking an object randomly. In fact, the more objects you can examine, (e.g. with a large N), the better “samples” you can construct so that the subsequent examinations will be more effectively in weeding out the objects that for sure cannot be the best one, as their scores will be lower than that of at least one objects in your “sample” space. This strategy is optimal as it does not decrease the probability of finding the best object even as the number of objects, N, goes to infinity, because as N gets bigger, our sample set size can also grow accordingly so that we have more scores to gauge the best object picked from the non-sample set.

So the optimal strategy will be something like this:

Given N objects to examine, designate the first k of the N objects as “samples” and do not accept any of them but remember their scores. Starting with the (k+1)st object all the way to the Nth object, as soon as you come across one with a score higher than the highest score in the sample set, accept it. If you did not come across any, then take the last object.

If you select the appropriate value for k, then you will have the optimal strategy and have a fixed probability of success (which is around 36.8% and approaches this figure from below more and more as N gets larger and larger).

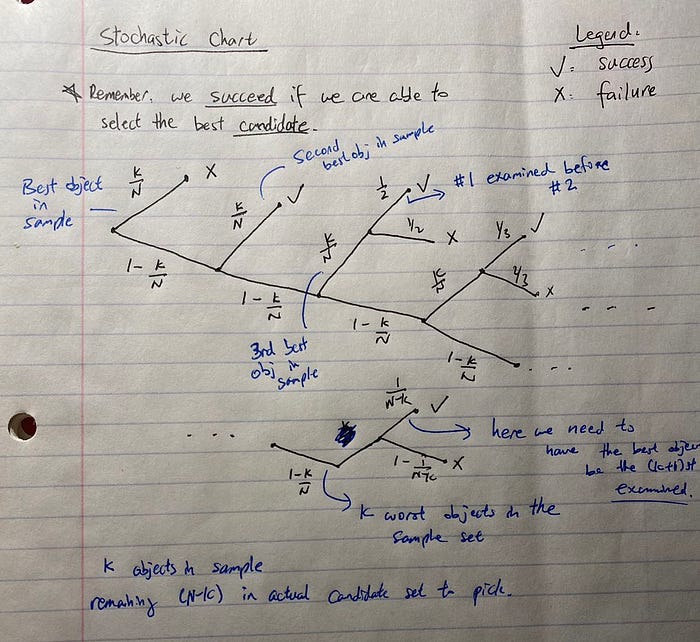

Finding k is not that straightforward because there is a trade-off in the size of k. If k is too small, then we don’t have a good score as the baseline to gauge the scores we see in the non-sample set. If k is too large, on the other hand, then it will be more likely that we have passed on the best object. So the optimal k is somewhere in between. How to find it? Math! More specifically: using a stochastic chart.

The Math

Our goal now is to find that “k”, i.e. the optimal sample set size for using as a gauge to find the object with the highest score. In order to do so, we must first visualize the process of picking out the object if we somehow have a definite value for k. If we know what k is, then starting with the (k+1)st object, we will have a k/N chance of failure because there is a k/N chance that the best object is in the sample set and the set size is k. Therefore, we would have a (1-k/N) chance of having a chance to pick out the best object.

If fall into the latter fortunate category of the best object not in the sample set, what would further help? Well, for starters, if the second best object is in the sample set. This, combined with the fact that the best object is in the non-sample set, means that we will definitely come across the best object while rejecting all others that we examine before getting to the best object.

If your head doesn’t hurt (and I apologize in advance if it does), bear with me. Remember everything is conditioned on the fact that the best object is in the non-sample set. If it is in the sample set, then that means we’ve passed it on and we cannot succeed in any case. As long as the best object is in the non-sample set, we have a chance of picking it out. Doing so depends on two factors:

- The quality of objects in the sample set

- The order of objects being examined in the non-sample set

If the quality of the sample set is good enough, such as having the second-best object in there, then we wouldn’t even care about the order of objects in the non-sample set, because we will reject all but the best object, thereby succeeding in our quest. Unfortunately, order does matter if we don’t have the second-best object in the sample set. Say we have the third best object in the sample set but the first two in the non-sample set. If we examine the second-best object before the best, then we will fail in our quest, because the second best object would, by definition, have a higher score than the third, and we only got one chance at picking an object. We would pick the second-best and be done with it, not knowing that a better one lies ahead.

The same line of thinking extends with #4 in sample set but #1–3 in non-sample set. In that case we must have #1 beating out #2 and #3 in order, as otherwise we would pick an inferior object. The worst case scenario would be that we have the k worst objects in the sample set of size k, in which case we must have the best object being the (k+1)st object examined, otherwise we pick out an inferior object as any object in the non-sample set beat out the best object in the sample in the worst case scenario.

This line of thinking leads to the following stochastic chart that Plato probably thought about as his teacher rambled about love.

Apologies for the messy drawings. I’m sure there are software out there that can draw neater charts, but I am going with my notebook here.

I should mention here that we are assuming that there is nothing special about the ordering of the objects being examined. That is, any permutation of the objects (in terms of examination order) has equal probability of occurring. In such cases the probability of the best object appearing before the second-best object in a set is, as you would imagine, 1/2, as out of all the available permutations, half of them will have #1 examined before #2 and the other half the opposite case. The same is true for three objects: the probability of having #1 ahead of #2 and #3 is 1/3, as the other two scenarios would feature #1 in the other two slots (2–1–3 and 2–3–1) equally likely.

From the stochastic process chart, the probability of picking out the best object is just the sum of all the “✓” paths. Each of those paths have segments which represent conditional probabilities that one multiplies together to obtain the paths probability.

So for a finite value of N, we would have the above expression for the probability of success given some k between 0 and N, and we would check each k and pick the k that obtains the highest probability.

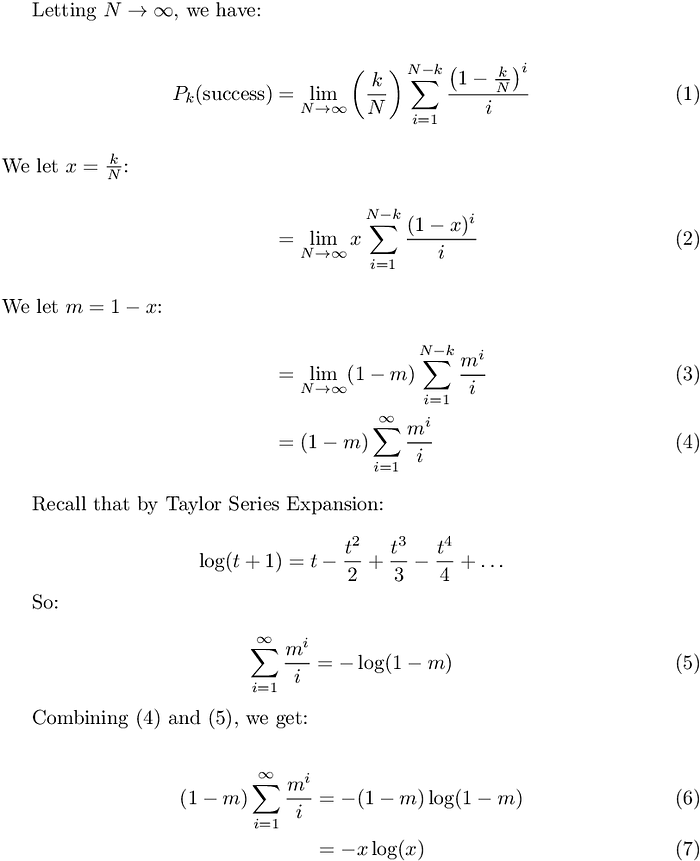

However, we are interested in the case when N is large, as random picking would have the probability of success going to 0 as N goes to infinity. When N goes to infinity, for the above expression, we can derive as follows:

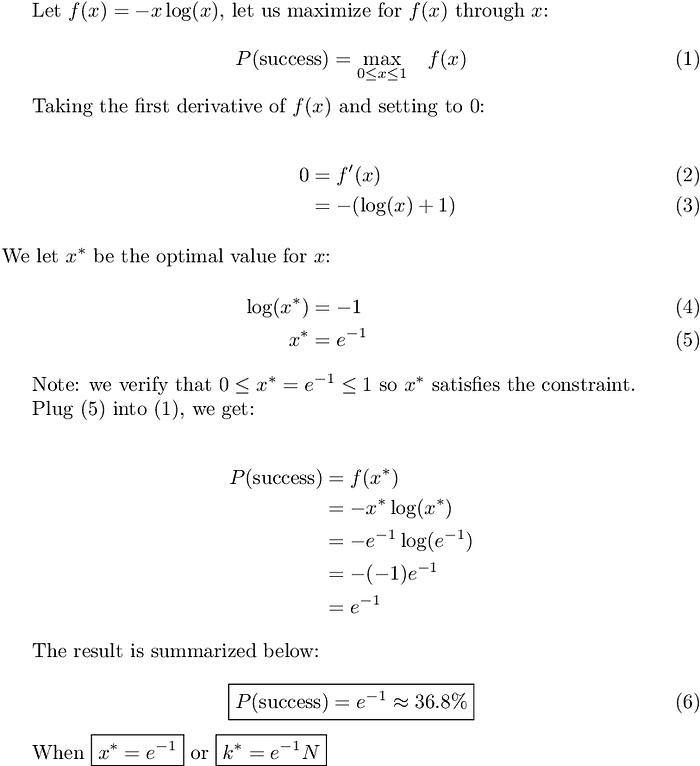

Now continuing with our optimizations (the equation numbers reset below).

If you are curious about how the probability chances as you vary x (the proportion of sample set size to the entire set size), the graph below illustrates the relationship as x various between 0 and 1. The graph passes the sanity check: if x = 0, we have no sample size, so we are just picking randomly and of course the probability of success = 1/N which goes to 0 as N goes to infinity. When x = 1, the entire set is the sample set, and therefore we cannot pick any object as the non-sample set is empty and hence the probability of success is again 0. As x varies between 0 and 1, the probability of finding the best object also varies from 0 and 1 and attains the maximum value of e^-1 as x = e^-1.

Takeaways

The results for N going to infinity can be interpreted as follows: for a large N (say N = 1000), we can let k = round(e^-1 * N) as the sample size (approximately 36.8% of the enter set size), and starting with the (k+1)st object, pick the first one encountered with a higher score than any of the objects in the sample set. Doing so maximizes the probability of picking out the best object, at exactly e^-1, which is roughly 36.8%.

So with a field of 1000 ears of wheat, Plato can scan through the first 368 ears and remember the size of the biggest one encountered. Then, starting with the 369th ear, as soon as he encounters an ear that is larger than the biggest encountered from the first 368, he picks it. Doing so will have him at approximately 36.8% chance of picking out the best ear of wheat. This number does not change when N gets bigger. If there are 10,000 ears of wheat, then Plato by letting the first 3679 ears as the sample set and performing the same strategy will still yield the optimal probability at getting the best ear of wheat: at 36.8%.

Getting sassy with Socrates!

Originally published at https://www.davidyding.com.